Building an Agent Operations Platform in One Session

One YouTube video, one insight, one session. What started as a note about persistent agent expertise turned into a full agent operations platform: 22 specialist roles, behavioral health monitoring, directive lifecycle tracking, and an E2E proof that closed in 8 minutes.

The insight was simple: agents that don't remember anything are expensive to operate.

That's the thing that landed from a YouTube video on multi-team agentic coding. Not the frameworks, not the tooling — the observation that every session starting from zero is a productivity tax you pay indefinitely. The video was demonstrating how teams of agents coordinate across repositories. The subtext was the part I couldn't stop thinking about: the agents had no institutional memory. Same mistakes, same context-loading overhead, same startup cost every single time.

I've been building in this space long enough to recognize when a single insight has compounding implications. This one implied a whole layer of infrastructure I hadn't built yet.

So I built it. One session. Here's what happened.

The Starting Point: What Was Already There

Mission Control is the internal portfolio dashboard — 49+ apps across 7 categories, live service status, deployment history. The backend already had a concept of agents in the principal-broker architecture: OpenClaw registers tools and skills, each with a description and routing metadata. But "agent" in that context meant "callable function." There was no concept of an agent as a persistent entity with expertise, history, or behavioral health.

The gap was clear. I was running coordinated multi-agent workstreams — audit swarms, feature teams, security reviews — and the only thing persisting between sessions was the code they produced. The agents themselves were stateless. Each dispatch started from the same blank slate.

That's the architectural debt that the YouTube video made visible.

The Agent Expertise Layer

The first thing I built was the expertise model. Twenty-two specialist roles, each with a defined domain, skill tags, and a persistent memory anchor in the Akashic Records knowledge system.

```python
AGENT_ROLES = {
    "backend-dev": AgentRole(
        name="Backend Developer",
        domain="API design, database schemas, service architecture",
        skills=["python", "fastapi", "postgresql", "redis", "testing"],
        memory_namespace="agents/backend-dev",
    ),
    "security-analyst": AgentRole(
        name="Security Analyst",
        domain="Vulnerability assessment, dependency auditing, threat modeling",
        skills=["static-analysis", "cve-scanning", "secrets-detection"],
        memory_namespace="agents/security-analyst",
    ),
    "trading-specialist": AgentRole(
        name="Trading Specialist",
        domain="Signal engineering, position sizing, risk management",
        skills=["polymarket", "signal-calibration", "backtesting"],
        memory_namespace="agents/trading-specialist",
    ),
    # ... 19 more roles
}
```

The memory namespace is the key piece. Every agent role maps to a namespace in the Akashic Records vector store. Lessons from past sessions — what approaches failed, which APIs have gotchas, what the project conventions are — persist under that namespace and get surfaced at dispatch time. An agent picking up a security audit today inherits the context from the last three security audits without any human having to re-brief it.

This is compound expertise. The agent gets smarter across sessions even though it's stateless within one.
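As a rough illustration of namespace-scoped recall at dispatch time, the sketch below swaps the vector store for a plain in-memory dictionary. `MemoryStore`, `remember`, `recall`, and `build_brief` are hypothetical names for this example, not the Akashic Records API, and recency stands in for whatever similarity search the real store performs.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Toy stand-in for a namespaced memory store (hypothetical, in-memory)."""

    _lessons: dict = field(default_factory=lambda: defaultdict(list))

    def remember(self, namespace: str, lesson: str) -> None:
        # Persist a lesson under the role's namespace.
        self._lessons[namespace].append(lesson)

    def recall(self, namespace: str, limit: int = 3) -> list:
        # Return up to `limit` of the most recent lessons, oldest first.
        # A real vector store would rank by relevance to the brief instead.
        if limit <= 0:
            return []
        return self._lessons[namespace][-limit:]


def build_brief(store: MemoryStore, namespace: str, task: str) -> str:
    """Prepend recalled lessons to the task so the agent starts with context."""
    lessons = store.recall(namespace)
    if not lessons:
        return task  # no institutional knowledge yet
    context = "\n".join(f"- {lesson}" for lesson in lessons)
    return f"Lessons from prior sessions:\n{context}\n\nTask: {task}"
```

The agent process itself stays stateless here: all continuity lives in the store, and the brief is rebuilt from it on every dispatch.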

The Teams Dashboard

Once I had the expertise model, I needed visibility. The Mission Control frontend got a new Teams tab: a full roster view of all 22 agent roles with live memory counts, recent directive history, and health status.

The component pulls from two sources: the expertise registry (static role definitions) and the Akashic Records API (live memory counts per namespace). When a namespace has zero memories, the agent is marked as a junior — no institutional knowledge yet. As memories accumulate from completed directives, the agent's experience level updates automatically.

```typescript
interface AgentCard {
  role: string
  name: string
  domain: string
  skills: string[]
  memoryCount: number
  experienceLevel: "junior" | "mid" | "senior" | "expert"
  recentDirectives: DirectiveSummary[]
  healthStatus: AgentHealthStatus
}
```

The experience thresholds are simple: fewer than 5 memories = junior, 5+ = mid, 20+ = senior, 50+ = expert. Arbitrary, but it gives operators a fast read on which agents have accumulated meaningful context and which are starting fresh.
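The threshold mapping can be sketched as a small pure function. The function name is hypothetical, and treating every count below 5 as junior is the reading assumed here.

```python
def experience_level(memory_count: int) -> str:
    """Map a namespace's memory count to an experience level.

    Thresholds follow the post (5+, 20+, 50+); any count below 5 is
    treated as junior in this sketch.
    """
    if memory_count < 0:
        raise ValueError("memory_count must be non-negative")
    if memory_count >= 50:
        return "expert"
    if memory_count >= 20:
        return "senior"
    if memory_count >= 5:
        return "mid"
    return "junior"
```

Under these thresholds a role with 34 memories reads as senior and a role with 2 reads as junior.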

The Teams dashboard isn't for the agents — they don't have UIs. It's for the operator. Full roster visibility changes how you dispatch. When you can see that the security analyst has 34 memories from prior audits and the trading specialist has 2, you make different routing decisions.

Wiring the Principal-Broker

The principal-broker is the routing layer in Mission Control's backend. OpenClaw registers skills here; the broker routes incoming requests to the right handler based on intent classification.

I registered 10 team skills as first-class tooling agents:

```python
TEAM_SKILLS = [
    SkillRegistration(
        name="audit-swarm",
        description="Multi-agent code audit across domain specialists",
        agent_role="security-analyst",
        dispatch_strategy="parallel",
        max_concurrent=5,
    ),
    SkillRegistration(
        name="feature-team",
        description="3-agent feature implementation (backend + frontend + QA)",
        agent_role="full-stack",
        dispatch_strategy="coordinated",
        max_concurrent=3,
    ),
    SkillRegistration(
        name="trading-bot-health",
        description="Test suites, calibration metrics, regression diagnosis for trading bots",
        agent_role="trading-specialist",
        dispatch_strategy="sequential",
        max_concurrent=1,
    ),
    # ... 7 more
]
```

The dispatch strategy field tells the broker how to handle parallelism. Audit swarms run up to 5 concurrent agents against different domains. Feature teams coordinate sequentially with handoff points. Trading bot health runs single-agent to prevent state conflicts on shared position data.
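One way a broker could enforce a `max_concurrent` cap is with an `asyncio.Semaphore`. This is a minimal sketch under that assumption, not the broker's actual dispatch code. `dispatch_parallel` and `peak_concurrency` are hypothetical names, and the handoff logic of the coordinated strategy is not modeled.

```python
import asyncio


async def dispatch_parallel(agent_factories, max_concurrent: int):
    """Run agent coroutines with at most `max_concurrent` in flight at once."""
    semaphore = asyncio.Semaphore(max_concurrent)

    async def run_one(factory):
        async with semaphore:  # waits here while the cap is reached
            return await factory()

    return await asyncio.gather(*(run_one(f) for f in agent_factories))


async def peak_concurrency(num_agents: int, max_concurrent: int) -> int:
    """Dispatch fake agents and return the highest number seen running at once."""
    running = 0
    peak = 0

    async def fake_agent():
        nonlocal running, peak
        running += 1
        peak = max(peak, running)
        await asyncio.sleep(0.01)  # stand-in for real agent work
        running -= 1
        return "done"

    await dispatch_parallel([fake_agent] * num_agents, max_concurrent)
    return peak
```

With `max_concurrent=1` the same function degrades to strictly sequential execution, which is how the single-agent trading case could be expressed without a separate code path.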

Each registration also carries a routing weight — the probability that an incoming directive matching that skill's keywords should be routed here versus escalated to the human operator. High-confidence, well-scoped tasks go straight to dispatch. Ambiguous or high-stakes directives queue for human review.
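The routing decision can be sketched as a threshold check on that weight. Everything below is illustrative: `RoutedSkill`, the whitespace keyword match, and the 0.8 threshold are assumptions for the example, since the post does not specify how the broker classifies intent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutedSkill:
    """Hypothetical view of a registration: name, keywords, routing weight."""

    name: str
    keywords: frozenset
    routing_weight: float  # confidence that matching directives belong here


def route(brief: str, skills: list, threshold: float = 0.8) -> str:
    """Return the skill to dispatch to, or "human_review" when unsure.

    The 0.8 threshold is an illustrative value, not one from the post.
    """
    words = set(brief.lower().split())
    matches = [s for s in skills if s.keywords & words]
    if not matches:
        return "human_review"  # nothing matched: ambiguous directive
    best = max(matches, key=lambda s: s.routing_weight)
    return best.name if best.routing_weight >= threshold else "human_review"
```

Keeping the fallback as an explicit return value means an unmatched or low-confidence directive always lands in the human queue rather than being dropped.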

Directive Lifecycle Tracking

Every agent dispatch is now a directive with a full lifecycle:

CREATED → QUEUED → DISPATCHED → IN_PROGRESS → COMPLETED | FAILED | ESCALATED
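The lifecycle reads naturally as a small state machine. The sketch below assumes that only `IN_PROGRESS` can reach a terminal state, as the arrow diagram suggests; a real system might also fail or escalate from earlier states. The enum values, `ALLOWED`, and `advance` are hypothetical.

```python
from enum import Enum


class DirectiveStatus(Enum):
    CREATED = "CREATED"
    QUEUED = "QUEUED"
    DISPATCHED = "DISPATCHED"
    IN_PROGRESS = "IN_PROGRESS"
    COMPLETED = "COMPLETED"
    FAILED = "FAILED"
    ESCALATED = "ESCALATED"


S = DirectiveStatus

# Legal forward transitions; terminal states map to an empty set.
ALLOWED = {
    S.CREATED: {S.QUEUED},
    S.QUEUED: {S.DISPATCHED},
    S.DISPATCHED: {S.IN_PROGRESS},
    S.IN_PROGRESS: {S.COMPLETED, S.FAILED, S.ESCALATED},
    S.COMPLETED: set(),
    S.FAILED: set(),
    S.ESCALATED: set(),
}


def advance(current: DirectiveStatus, new: DirectiveStatus) -> DirectiveStatus:
    """Return `new` if the transition is legal, otherwise raise ValueError."""
    if new not in ALLOWED[current]:
        raise ValueError(f"illegal transition: {current.name} -> {new.name}")
    return new
```

Rejecting illegal transitions at write time keeps the audit trail trustworthy: a directive cannot show up as completed without having been dispatched.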

The directive record captures: who issued it, which agent role received it, the initial brief, the dispatch timestamp, intermediate status updates, the completion artifact (PR URL, findings report, etc.), and the final outcome classification.

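```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional

# DirectiveStatus, DirectiveOutcome, and HealthEvent are defined elsewhere.


@dataclass
class Directive:
    id: str
    issuer: str  # "openclaw" | "knox" | "scheduled"
    agent_role: str
    skill: str
    brief: str
    status: DirectiveStatus
    created_at: datetime
    dispatched_at: Optional[datetime]
    completed_at: Optional[datetime]
    artifact_url: Optional[str]
    outcome: Optional[DirectiveOutcome]
    escalation_reason: Optional[str]
    health_events: List[HealthEvent]
```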

The health_events list is where behavioral monitoring hooks in — more on that in a moment.

The Mission Control UI shows the full directive queue: pending, in-flight, completed, and failed. Each directive card expands to show the full timeline, the agent's intermediate updates, and the completion artifact. For a PR-producing directive, that's a direct link to the GitHub PR with the diff.

Structured Reporting and CEO Triage

The reporting layer is where I spent the most time getting the design right.

The naive approach is to surface everything to the human operator and let them decide. That doesn't scale. When you're running 20+ active directives across a fleet, every decision that reaches the operator is a coordination failure — it means the system couldn't resolve it autonomously.

The right model is a triage engine with deterministic auto-resolve rules. The CEO (in this context: me, or OpenClaw acting as the executive agent) only sees what genuinely requires a decision.

```python
TRIAGE_RULES = [
    # Auto-resolve: CI passes, coverage maintained, no security regressions
    TriageRule(
        condition=lambda d: (
            d.ci_status == "passing"
            and d.coverage_delta >= 0
            # security_findings is a list (the next rule iterates it),
            # so test for emptiness rather than comparing to 0
            and len(d.security_findings) == 0
        ),
        action="auto_merge",
        confidence=0.95,
    ),
    # Auto-escalate: any security finding rated HIGH or CRITICAL
    TriageRule(
        condition=lambda d: any(
            f.severity in ("HIGH", "CRITICAL") for f in d.security_findings
        ),
        action="escalate_immediate",
        confidence=1.0,
    ),
    # Auto-resolve: coverage drop within tolerance, no failures
    TriageRule(
        condition=lambda d: (
            d.coverage_delta >= -2.0  # max 2% drop allowed
            and d.test_failures == 0
            and d.ci_status == "passing"
        ),
        action="auto_merge",
        confidence=0.85,
    ),
    # Escalate: unknown state (missing artifacts, timeout, or parse failure)
    TriageRule(
        condition=lambda d: d.outcome == DirectiveOutcome.UNKNOWN,
        action="escalate_review",
        confidence=0.75,
    ),
]
```

The rules evaluate in priority order. First match wins. If no rule matches, the directive goes to the default escalation queue.
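First-match-wins evaluation is a short loop. The sketch below uses a stripped-down, hypothetical `Rule` type and `SimpleNamespace` objects in place of real directive results; the default action name `escalate_default` is also an assumption.

```python
from dataclasses import dataclass
from types import SimpleNamespace
from typing import Any, Callable, Sequence


@dataclass(frozen=True)
class Rule:
    """Hypothetical minimal rule: a predicate plus the action it selects."""

    condition: Callable[[Any], bool]
    action: str


def triage(result: Any, rules: Sequence[Rule], default: str = "escalate_default") -> str:
    """Return the action of the first matching rule, else the default."""
    for rule in rules:  # list order is priority order
        if rule.condition(result):
            return rule.action
    return default


RULES = [
    Rule(lambda r: r.ci_status == "passing" and not r.severities, "auto_merge"),
    Rule(lambda r: any(s in ("HIGH", "CRITICAL") for s in r.severities),
         "escalate_immediate"),
]

clean = SimpleNamespace(ci_status="passing", severities=[])
critical = SimpleNamespace(ci_status="passing", severities=["CRITICAL"])
failing = SimpleNamespace(ci_status="failing", severities=[])
```

Here `triage(clean, RULES)` selects `auto_merge`, `triage(critical, RULES)` selects `escalate_immediate`, and `triage(failing, RULES)` falls through to the default queue. Because the first match wins, rule order is part of the policy and deserves the same review as the conditions themselves.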

The CEO report aggregates the completed cycle: how many directives ran, how many auto-resolved, how many escalated, P0 findings requiring immediate attention, and a digest of the artifacts produced. It's a Slack message that takes 30 seconds to read and gives you a complete picture of the fleet's output.

The design goal of a triage engine: The human should only see decisions that require human judgment. Everything else is noise that trains the operator to stop reading the reports. Auto-resolve the deterministic cases. Escalate the ambiguous ones. Never mix them in the same queue.

Behavioral Health Monitoring

This was the part I hadn't planned to build but ended up being the most technically interesting.

When you're running multi-agent workstreams, agents fail in non-obvious ways. Not just "wrong output" or "test failure" — those are easy to detect. The failure modes that cost you the most are behavioral:

Hallucination drift: the agent confidently produces outputs that look correct but contain fabricated file paths, function names, or API behaviors that don't exist. It gets caught at the diff stage, not at dispatch time.

Doom spirals: the agent detects a problem, attempts a fix, the fix fails, it attempts a different fix, that also fails, and the cycle continues indefinitely. No progress, escalating confidence scores, resource consumption compounding.

Stale execution: the agent produces output that passes automated checks but is based on a stale understanding of the codebase. The test suite passes because the tests don't cover the affected code, not because the change is correct.

The health monitor attaches to directive execution and watches for signals:

```python
HEALTH_SIGNALS = {
    "hallucination_risk": [
        Signal("nonexistent_import", pattern=r"from \S+ import \S+", validator=validate_import_exists),
        Signal("phantom_function_call", validator=validate_function_exists_in_codebase),
        Signal("fabricated_api_response", validator=check_response_matches_schema),
    ],
    "doom_spiral": [
        Signal("repeated_approach", detector=detect_repeated_fix_pattern, threshold=3),
        Signal("escalating_scope", detector=detect_scope_expansion, threshold=2),
        Signal("circular_dependency", detector=detect_circular_fix_chain),
    ],
    "stale_execution": [
        Signal("test_coverage_gap", validator=check_affected_paths_have_coverage),
        Signal("outdated_schema_reference", detector=detect_schema_drift),
        Signal("branch_divergence", detector=check_branch_freshness, max_age_hours=24),
    ],
}
```

When a health signal fires, it creates a HealthEvent on the directive record. Three events of the same type within a directive's lifecycle trigger an automatic pause: the agent is halted, the event is logged, and the directive is escalated with the health events as context.
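The three-strikes pause can be sketched with a per-directive counter. `HealthTracker` and its fields are hypothetical, and counting by signal name (for example `repeated_approach`) rather than by category is an assumption about what "same type" means.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional

PAUSE_THRESHOLD = 3  # events of the same type before the directive pauses


@dataclass
class HealthTracker:
    """Counts health events per type for one directive (hypothetical helper)."""

    counts: Counter = field(default_factory=Counter)
    paused: bool = False
    pause_reason: Optional[str] = None

    def record(self, event_type: str) -> bool:
        """Record an event; return True if the directive is now paused."""
        if self.paused:
            return True  # already halted, nothing more to count
        self.counts[event_type] += 1
        if self.counts[event_type] >= PAUSE_THRESHOLD:
            self.paused = True
            self.pause_reason = f"{event_type} ({self.counts[event_type]} events)"
        return self.paused
```

Events of different types accumulate independently, so one `escalating_scope` event between two `repeated_approach` events does not reset or advance the other count.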

The operator sees: directive paused, reason: doom_spiral (3 repeated_approach events), last attempted fix: [summary]. That's actionable. You can read the fix attempts, identify the misunderstanding, update the brief, and re-dispatch with corrected constraints.

Doom spirals without detection compound exponentially. An agent that's stuck will spend 10x the token budget of a successful run trying variations on a broken approach. The health monitor isn't just quality control — it's cost control.

E2E Proof: Directive to Findings in 8 Minutes

Theory without demonstration is design fiction. So I ran it.

I issued a directive through Mission Control's new interface:

```text
Directive: Run comprehensive code audit on ask-knox
Agent role: security-analyst
Skill: audit-swarm
Brief: Full audit of ask-knox repository. Cover security,
       architecture, test coverage, and performance.
       Generate MASTER-SUMMARY.md with P0/P1/P2 classifications.
```

The audit-swarm skill dispatched 5 parallel agents: a static analyzer, a dependency auditor, a threat modeler, a test coverage analyst, and an architecture reviewer. Each agent ran against the same codebase from its domain perspective, then a synthesis agent aggregated findings and classified them.

Results:

- 61 findings across all domains

- 8 minutes wall-clock from directive dispatch to MASTER-SUMMARY.md committed

- P0: 3 (critical — immediate action required)

- P1: 14 (high — fix before next deploy)

- P2: 44 (medium/low — backlog)

The triage engine evaluated the output: P0 findings were present, so nothing qualified for auto-merge and the directive was escalated to CEO review. I got a Slack message with the summary and the MASTER-SUMMARY.md link within 30 seconds of the swarm completing.

That's the loop closing. Directive issued → agents dispatched → findings produced → triage evaluated → human notified with exactly the information they need to act.

What This Architecture Actually Gives You

The InDecision Framework is built on one premise: remove friction from the decision that matters, eliminate the decisions that don't. That's exactly what this platform does at the infrastructure layer.

Before: every agent dispatch was a manual operation. I set up the tools, wrote the brief, monitored the output, made the triage decision, merged or escalated. Every directive was a full-attention operation.

After: directives flow. OpenClaw issues them from cron jobs and event triggers. The broker routes them to the right specialist. The health monitor catches failure modes before they compound. The triage engine resolves what it can. I see the things that need me and nothing else.

The expertise layer means agents get better over time. The directive lifecycle means every operation is auditable. The health monitoring means fleet reliability is observable. The CEO triage means the operator's attention is protected.

None of these are hard problems in isolation. The value is the integration — a coherent system where the primitives compose correctly.

What's Next

The reporting layer needs richer attribution — I want to see per-agent memory utilization rates and identify which role namespaces are growing fastest (signal of which agents are doing the most work). The health monitor needs calibration: the current thresholds are conservative, and I expect false positive rates to be higher than acceptable until I have enough real data to tune them.

The bigger structural work is feedback loops. When a directive auto-resolves, that outcome — the full context, the approach that worked — should propagate back to the agent's memory namespace. Currently, only explicit post-session retros write to Akashic. Automatic memory accumulation from resolved directives would close the compound expertise loop without requiring human intervention.

That's the system I'm building toward: one where each successful directive makes the next one marginally cheaper, and that marginal improvement compounds indefinitely across the fleet.

The session was 6 hours. The architecture is live. The proof ran in 8 minutes.

That's the pace you can operate at when the infrastructure is right.

Agent orchestration is covered end-to-end in the Multi-Agent Systems track. 11 lessons.

Start the Multi-Agent Systems track →Explore the Invictus Labs Ecosystem

Follow the Signal

If this was useful, follow along. Daily intelligence across AI, crypto, and strategy — before the mainstream catches on.

The Bot That Had Never Made a Dollar

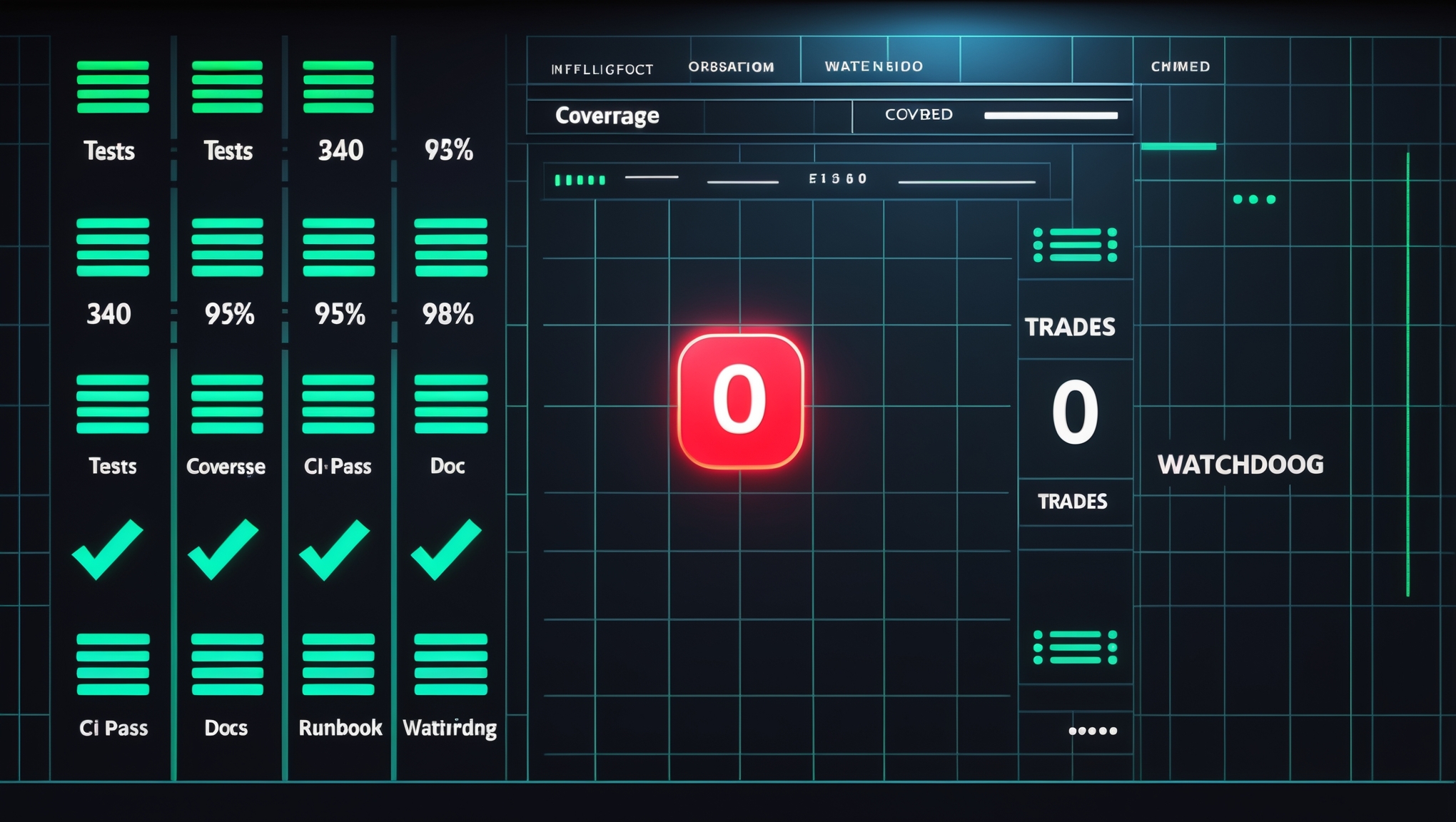

Three hundred and forty tests. Ninety-five percent coverage. Five docs, a runbook, a watchdog, and a launchd plist. In three weeks it had never placed a real trade. This is what we learned when we finally pulled the data instead of writing another fix.

Why I Built Blueprint: The Framework I Wish I Had

After 16 years of building products, leading teams, and watching smart people ship the wrong thing confidently, I built the AI tool I always needed. This is why Blueprint exists.

The Bot That Never Blinks: Zero-Downtime Hot Reload for Live Trading Systems

Most trading bots treat deployment like a surgery that requires general anesthesia. Foresight doesn't go under anymore. Here's the architecture that made that possible.